LLMWise vs MARS8 Text to Speech AI Models

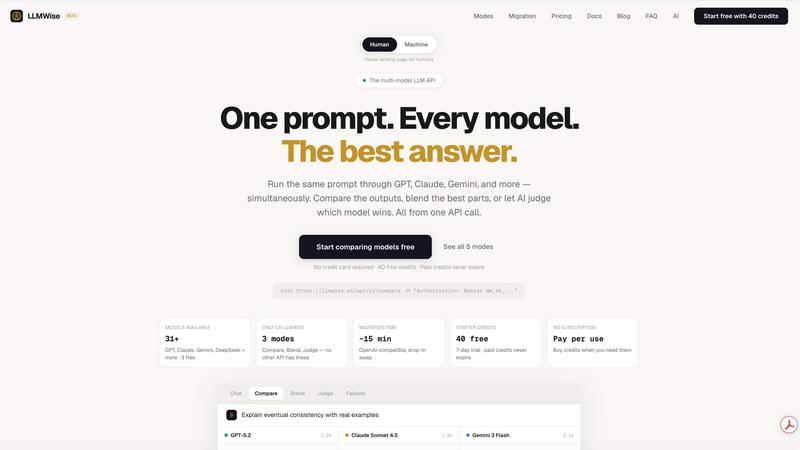

LLMWise

LLMWise offers unified access to 62+ AI models with smart routing, enabling seamless comparison and cost-effective usage.

Last updated: February 28, 2026

MARS8 Text to Speech AI Models

MARS8's production-grade TTS models deliver unmatched reliability for every voice and language.

Last updated: February 28, 2026

Feature Comparison

LLMWise

Smart Routing

LLMWise employs intelligent routing technology that automatically selects a model based on your prompt's requirements. For example, code-related queries are sent to GPT, creative writing tasks are routed to Claude, and translation requests go to Gemini, so each request reaches a model suited to it.
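The routing idea above can be illustrated with a toy sketch. LLMWise's actual routing logic is not described here, so everything below is an assumption made for illustration: the keyword patterns, the `classify` and `route` helpers, and the `DEFAULT_MODEL` fallback are hypothetical, and only the task-to-model pairs come from the text.

```python
import re

# Task-to-model pairs taken from the examples in the text.
ROUTES = {
    "code": "gpt",
    "creative": "claude",
    "translation": "gemini",
}

# Hypothetical fallback when no pattern matches (an assumption, not from the source).
DEFAULT_MODEL = "claude"

# Toy keyword patterns; a production router would likely use a trained classifier.
PATTERNS = [
    ("translation", re.compile(r"\b(translate|translation)\b", re.I)),
    ("code", re.compile(r"\b(function|bug|python|compile|traceback)\b", re.I)),
    ("creative", re.compile(r"\b(story|poem|slogan|lyrics)\b", re.I)),
]


def classify(prompt: str) -> str:
    """Return the first task whose pattern matches, or 'general' if none do."""
    for task, pattern in PATTERNS:
        if pattern.search(prompt):
            return task
    return "general"


def route(prompt: str) -> str:
    """Map a prompt to a model family name."""
    return ROUTES.get(classify(prompt), DEFAULT_MODEL)
```

Calling `route("Translate this to French")` returns `"gemini"` under these patterns. The patterns are checked in order, so a prompt that mentions both translation and code goes to the translation route.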

Compare & Blend

The compare feature allows users to run prompts across different models simultaneously, providing a clear side-by-side view of the results. With the blending feature, LLMWise intelligently combines the best parts of multiple outputs into one cohesive answer, elevating the quality of the final response beyond what any single model could achieve.
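A compare step of this kind can be sketched as a concurrent fan-out. This is a minimal illustration, not LLMWise's API: the `compare` function is hypothetical, and each model is represented by a plain callable that stands in for a real provider call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# A model is represented here as any callable taking a prompt and returning text.
ModelFn = Callable[[str], str]


def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the same prompt to every model concurrently and collect the outputs by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        # result() blocks until that model's call finishes and re-raises its exception, if any.
        return {name: future.result() for name, future in futures.items()}
```

The returned dictionary keeps one entry per model, which is enough to render a side-by-side view. Blending is not shown because the text does not say how outputs are combined.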

Circuit-Breaker Failover

Resilience is built into LLMWise with its circuit-breaker failover mechanism. If one of the AI providers experiences downtime, LLMWise automatically reroutes your requests to backup models, preventing any disruption in service and ensuring your application stays operational.
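For readers unfamiliar with the pattern, a generic circuit breaker with failover can be sketched as follows. This shows the general technique under assumed parameters, not LLMWise's implementation: `CircuitBreaker`, `call_with_failover`, the failure threshold, and the cooldown are all hypothetical.

```python
import time
from typing import Callable, List, Optional, Tuple


class CircuitBreaker:
    """Stops sending requests to a provider after repeated failures, for a cooldown period."""

    def __init__(self, max_failures: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allows_request(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cooldown, requests are allowed again; another failure re-opens the breaker.
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in priority order, skipping any whose breaker is open."""
    for name, call_fn, breaker in providers:
        if not breaker.allows_request():
            continue
        try:
            result = call_fn(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable")
```

Once a provider's breaker opens, it is skipped without a network call until the cooldown passes, which keeps a failing provider from adding latency to every request.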

Test & Optimize

LLMWise provides robust benchmarking and optimization tools that allow developers to run batch tests and evaluate the performance of different models. Users can set optimization policies based on speed, cost, or reliability to fine-tune their applications and ensure they are getting the best value for their usage.
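An optimization policy of this kind can be pictured as a selection rule over batch-test results. The sketch below is illustrative only: the `BenchmarkResult` fields, the `pick_model` function, and the policy names as string values are assumptions, and the sample numbers are placeholders rather than measurements.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class BenchmarkResult:
    model: str
    median_latency_ms: float
    cost_per_request_usd: float
    success_rate: float  # fraction of batch requests that succeeded, 0.0 to 1.0


def pick_model(results: Iterable[BenchmarkResult], policy: str) -> str:
    """Choose a model name according to a 'speed', 'cost', or 'reliability' policy."""
    candidates = list(results)
    if not candidates:
        raise ValueError("no benchmark results to choose from")
    if policy == "speed":
        best = min(candidates, key=lambda r: r.median_latency_ms)
    elif policy == "cost":
        best = min(candidates, key=lambda r: r.cost_per_request_usd)
    elif policy == "reliability":
        best = max(candidates, key=lambda r: r.success_rate)
    else:
        raise ValueError(f"unknown policy: {policy!r}")
    return best.model


# Placeholder numbers for illustration only, not measured results.
SAMPLE = [
    BenchmarkResult("model-a", 420.0, 0.0040, 0.990),
    BenchmarkResult("model-b", 180.0, 0.0090, 0.970),
    BenchmarkResult("model-c", 650.0, 0.0015, 0.999),
]
```

With the placeholder data, `pick_model(SAMPLE, "speed")` selects `model-b`, while the cost and reliability policies both select `model-c`.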

MARS8 Text to Speech AI Models

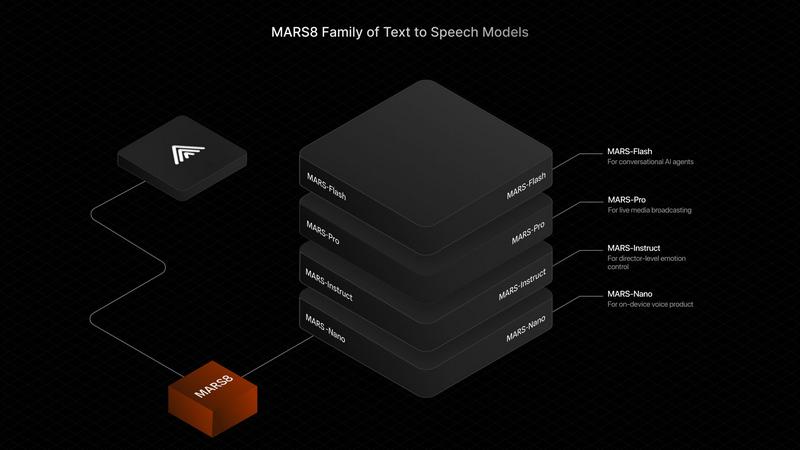

The MARS8 Model Family

MARS8 rejects the generic model approach. It ships as a specialized family where each model is engineered to dominate a specific performance frontier. This includes MARS-Flash for the lowest possible latency, MARS-Pro for the perfect balance of speed and fidelity, MARS-Instruct for granular emotional and director-level control, and MARS-Nano for high-quality, on-device processing. This strategic specialization ensures developers are never forced to compromise.

Cloud-Agnostic Deployment

MARS8 launches as the first TTS model family available natively on all top compute platforms, including AWS and Google Cloud. This revolutionary approach eliminates the "API tax" and vendor lock-in, giving developers and enterprises the freedom to deploy on their terms, scale with their preferred infrastructure, and optimize costs without being trapped by a single provider's ecosystem.

Global Language & Voice Coverage

The system supports a wide range of languages and dialects, designed to cover 99% of the world's speaking population. It offers both Premium and Standard tiers for languages like English, Hindi, Spanish, French, Japanese, Arabic, and many more, providing authentic, high-quality speech synthesis for a global audience.

Enterprise-Grade Performance & Security

Benchmarked as the world's leading TTS model, MARS8 sets new baselines in quality and speaker similarity metrics. It is built for mission-critical, high-scale production environments, backed by SOC 2 Type II compliance. This combination of proven, superior performance and rigorous security standards makes it fit for enterprise deployment where reliability is non-negotiable.

Use Cases

LLMWise

Rapid Prototyping

Developers can use LLMWise to quickly prototype applications by leveraging its extensive library of models. With 30 free models available, users can test various prompts and functionalities without incurring costs, facilitating rapid development cycles.

Edge Case Handling

In scenarios where specific prompts may challenge a single model, LLMWise's compare mode allows developers to test the same input across different models. This ensures that edge cases are handled effectively, saving valuable debugging time.

Cost Optimization

By integrating LLMWise, teams can cut down on expenses associated with multiple AI subscriptions. With the ability to bring your own keys and only pay for what you use, businesses can optimize their AI spending without sacrificing quality.

Enhanced Content Creation

Content creators can harness LLMWise to produce high-quality material by blending outputs from several models. This enables the creation of richer and more nuanced content, making it ideal for marketing, storytelling, and other creative endeavors.

MARS8 Text to Speech AI Models

Live Broadcasting & Real-Time Translation

This is MARS8's proving ground. It is engineered for live sports, news, and events where real-time voiceovers and translations must be flawless and instantaneous. The model's reliability ensures that when millions are watching, the audio delivery is perfectly synchronized and accurate, with zero room for error.

Conversational AI & Voice Agents

For real-time voice agents in contact centers, virtual assistants, and interactive AI, MARS-Flash delivers the ultra-low latency required for natural, fluid conversations. It minimizes time to first byte (TTFB), the delay before the first audio is returned, so interactions feel human and immediate, eliminating awkward pauses that break user immersion.
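To make the latency metric concrete, here is a minimal sketch of how TTFB could be measured for any streaming TTS response. It does not use a MARS8 API: the `measure_ttfb` helper is hypothetical, and the audio stream is assumed to be any iterable that yields byte chunks as they arrive.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_ttfb(chunks: Iterable[bytes],
                 clock: Callable[[], float] = time.perf_counter) -> Tuple[float, bytes]:
    """Return (seconds until the first chunk arrived, the full audio payload)."""
    start = clock()
    stream = iter(chunks)
    first = next(stream, b"")  # blocks until the first chunk arrives (or the stream ends)
    ttfb = clock() - start
    audio = first + b"".join(stream)
    return ttfb, audio
```

For a conversational agent, the first number matters more than the total synthesis time, because playback can begin as soon as the first chunk arrives.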

Content Dubbing & Audiobook Production

MARS-Pro excels in media production, offering the ideal blend of speed and high-fidelity audio output. It enables rapid, high-quality dubbing for video content and generates rich, expressive narration for audiobooks and long-form content, capturing subtle emotional tones and maintaining consistent voice profiles.

On-Device & Edge Applications

MARS-Nano brings high-quality TTS capabilities directly to devices, enabling applications in IoT, mobile apps, and other environments where network connectivity is limited, latency is critical, or data privacy is paramount. This allows for responsive, private voice interactions without relying on cloud APIs.

Overview

About LLMWise

LLMWise is an API for developers who want access to leading large language models (LLMs) through one integration. It consolidates multiple AI providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek, into a single API endpoint and routes each prompt to the model best suited to the task, whether that is code assistance, creative writing, or translation. The platform offers side-by-side comparisons of outputs from various models and can blend responses into a stronger answer. Built-in failover keeps applications operational even if a provider goes down. With flexible pricing and no subscription fees, LLMWise is positioned as a go-to option for developers who want to use multiple models without managing multiple subscriptions.

About MARS8 Text to Speech AI Models

MARS8 is the world's leading family of production-grade text-to-speech AI models, engineered for the most demanding real-world applications. Born from the crucible of live sports and news broadcasting, where a single mistake can be seen by millions, MARS8 delivers rock-solid reliability and unmatched quality. It shatters the one-size-fits-all approach by offering a specialized family of models, ensuring every use case—from ultra-low-latency conversational agents to emotionally rich audiobook narration—gets a purpose-built solution. Designed for developers, enterprises, and infrastructure providers, its core value proposition is battle-tested performance, global language support covering 99% of the world's speaking population, and liberation from vendor lock-in as the first TTS model family natively available on every major cloud platform. When live doesn't lie, you build with MARS8.

Frequently Asked Questions

LLMWise FAQ

How does LLMWise select the right model for my prompt?

LLMWise uses intelligent routing technology that analyzes your prompt and matches it with the most appropriate model based on its strengths and capabilities.

Is there a cost to use LLMWise?

LLMWise operates on a pay-per-use model, which means you only pay for what you use. Additionally, you start with 20 free credits that never expire, allowing you to explore the service without financial commitment.

Can I use my own API keys with LLMWise?

Yes, LLMWise supports Bring Your Own Key (BYOK), enabling users to integrate their existing API keys for various models, which can lead to significant cost savings.

What happens if one of the AI providers goes down?

LLMWise includes a circuit-breaker failover feature that automatically reroutes your requests to backup models if a primary provider is unavailable, ensuring uninterrupted service for your applications.

MARS8 Text to Speech AI Models FAQ

What makes MARS8 different from other TTS APIs?

MARS8 is not a single, compromised model. It is a battle-tested family of specialized models, each engineered to win in specific scenarios like ultra-low latency or emotional control. Furthermore, it is the first TTS model family natively available on all major clouds, freeing you from vendor lock-in and the associated API tax.

Which MARS8 model should I use for my application?

Choose based on your primary need. Use MARS-Flash for real-time conversational agents, MARS-Pro for high-fidelity dubbing and audiobooks, MARS-Instruct for projects requiring precise emotional or directorial control, and MARS-Nano for on-device applications.
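The guidance above amounts to a small lookup, sketched here for teams that want to encode it in configuration. The model names come from the text, while the need labels and the `choose_mars8_model` function are hypothetical and not part of any MARS8 SDK.

```python
# Need labels are hypothetical; model names are those listed in the text.
MODEL_BY_NEED = {
    "realtime_agent": "MARS-Flash",
    "dubbing_or_audiobook": "MARS-Pro",
    "emotional_control": "MARS-Instruct",
    "on_device": "MARS-Nano",
}


def choose_mars8_model(need: str) -> str:
    """Return the MARS8 model recommended for a primary need."""
    try:
        return MODEL_BY_NEED[need]
    except KeyError:
        valid = ", ".join(sorted(MODEL_BY_NEED))
        raise ValueError(f"unknown need {need!r}; expected one of: {valid}") from None
```

Raising on an unknown need, rather than silently defaulting, makes a misconfigured application fail early.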

How does MARS8 achieve such high performance in benchmarks?

MARS8 was built for the extreme demands of live content, where failure is not an option. This production-first mindset, combined with a specialized model architecture for different tasks, allows it to outperform generic models in key metrics like production quality (PQ), content enjoyment (CE), and speaker similarity.

What does "cloud-agnostic" or "natively available on all clouds" mean?

It means you can deploy and run the MARS8 models directly on infrastructure from AWS, Google Cloud, and other major providers, not just through a proprietary API. You manage the compute, giving you full control over scaling, costs, and integration with your existing cloud stack, avoiding dependency on a single vendor.

Alternatives

LLMWise Alternatives

LLMWise is a unified access platform for large language models (LLMs), designed to streamline AI integration for developers. By consolidating access to major providers like OpenAI, Anthropic, and Google, LLMWise simplifies the process of selecting the best model for various tasks. Users often seek alternatives due to factors such as pricing, specific feature requirements, or the need for a platform that better aligns with their project goals and technical needs. When evaluating alternatives, it’s crucial to consider aspects like model variety, routing intelligence, reliability, and how well the solution integrates into existing workflows.

MARS8 Text to Speech AI Models Alternatives

MARS8 Text to Speech AI Models are built specifically for high-stakes, real-time applications like live sports and news broadcasting. This category demands reliability and speed, because any error is instantly visible to a global audience. Developers often seek alternatives to MARS8 for various reasons, including budget constraints, specific feature requirements not covered by the MARS8 family, or the need for deployment on a particular cloud platform or on-premise infrastructure. The search for a different balance between cost, latency, and voice quality is a common driver. When evaluating any alternative, key criteria should include real-time performance metrics, the emotional range and naturalness of the voice output, language support breadth, and the flexibility of the deployment model. The right choice hinges on whether the solution can handle the pressure of your specific use case, from conversational agents to professional media production.