LongCat Video Avatar

LongCat Video Avatar creates audio-driven avatars for seamless long-form video generation.

About LongCat Video Avatar

LongCat Video Avatar is an audio-driven avatar model engineered for generating long-duration videos. Built on the LongCat-Video architecture, it delivers realistic lip synchronization, fluid human dynamics, and consistent identity, even across extended video sequences. It is aimed at creators who want professional quality without the complexity typically associated with video production. Suited to applications ranging from digital acting to virtual presentations, LongCat Video Avatar turns audio input into video output that remains visually coherent from beginning to end. By prioritizing realism and stability, it helps users create engaging content, making it a strong choice for anyone looking to push the boundaries of video generation technology.

Features of LongCat Video Avatar

Unified Multi-Mode Generation

LongCat Video Avatar excels with its integrated approach, supporting Audio-Text-to-Video (AT2V), Audio-Text-Image-to-Video (ATI2V), and Audio-conditioned Video Continuation. This comprehensive framework allows creators to switch seamlessly between various modes, simplifying workflows and enhancing creative possibilities.
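As a rough illustration of how the three modes differ by input, the sketch below picks a mode from whichever inputs a caller supplies. The `Mode` enum and `select_mode` helper are hypothetical names invented for this example, not part of any LongCat Video Avatar API; the only thing taken from the description above is which inputs each mode combines.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    # Hypothetical labels for the three modes named in the text.
    AT2V = "audio-text-to-video"
    ATI2V = "audio-text-image-to-video"
    CONTINUATION = "audio-conditioned-video-continuation"


def select_mode(audio: str,
                text: Optional[str] = None,
                image: Optional[str] = None,
                video: Optional[str] = None) -> Mode:
    """Pick a generation mode from the inputs supplied (illustrative only)."""
    if not audio:
        # Every mode listed above is audio-driven.
        raise ValueError("audio is required for every mode")
    if video is not None:
        # An existing clip plus audio means continuing that clip.
        return Mode.CONTINUATION
    if text is None:
        raise ValueError("AT2V and ATI2V both need a text prompt")
    # A reference image switches AT2V to ATI2V.
    return Mode.ATI2V if image is not None else Mode.AT2V
```

For example, `select_mode("speech.wav", text="a presenter on stage")` returns `Mode.AT2V`, while adding `image="portrait.png"` returns `Mode.ATI2V`.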

Long-Sequence Stability at Scale

Through Cross-Chunk Latent Stitching, LongCat Video Avatar maintains visual clarity across lengthy video segments. The technique prevents pixel degradation and visual noise, so creators can produce high-quality content regardless of duration.
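The description above does not say how the stitching works internally. One plausible reading, assumed here, is that a long video is generated chunk by chunk and the tail latents of each chunk are handed to the next one directly, without a decode and re-encode round trip in between. The sketch below shows only that generic carry-over pattern; `generate_long` and the `generate_chunk` callback are hypothetical names, and this is not the model's actual implementation.

```python
from typing import Callable, List, TypeVar

Latent = TypeVar("Latent")  # stand-in for one latent frame


def generate_long(num_chunks: int,
                  chunk_len: int,
                  overlap: int,
                  generate_chunk: Callable[[int, List[Latent], int], List[Latent]],
                  ) -> List[Latent]:
    """Chain chunks in latent space, carrying tail latents forward as context."""
    video: List[Latent] = []
    context: List[Latent] = []  # empty for the first chunk
    for index in range(num_chunks):
        # The generator sees the previous chunk's tail latents as-is.
        chunk = generate_chunk(index, context, chunk_len)
        video.extend(chunk)
        # Keep the last `overlap` latents as context for the next chunk.
        context = video[-overlap:] if overlap > 0 else []
    return video


def toy_chunk(index: int, context: List[int], length: int) -> List[int]:
    # Toy generator: continues counting from the last context latent.
    start = context[-1] + 1 if context else 0
    return list(range(start, start + length))


frames = generate_long(num_chunks=3, chunk_len=4, overlap=2,
                       generate_chunk=toy_chunk)
```

With the toy generator, the three chunks join into one unbroken sequence, which is the property the stitching is meant to preserve at the latent level.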

Natural Human Dynamics Beyond Speech

With its Disentangled Unconditional Guidance mechanism, LongCat Video Avatar decouples audio signals from motion dynamics. This results in lifelike gestures and idle movements, making avatars appear more human-like and natural even in silent moments, elevating the overall viewing experience.

Identity Preservation Without Copy-Paste Artifacts

LongCat Video Avatar utilizes Reference Skip Attention to maintain character identity and avoid the common pitfalls of rigid appearance seen in other reference-heavy models. This ensures that avatars retain their unique traits and characteristics throughout the video, enhancing viewer engagement.

Use Cases of LongCat Video Avatar

Actor / Actress

LongCat Video Avatar revolutionizes the acting landscape by enabling the generation of expressive performances where lip movements are perfectly synchronized with dialogue. This technology allows for the creation of long cinematic scenes with consistent facial identity, enhancing storytelling.

Singer

For musical performances, LongCat Video Avatar provides rhythm-aware body motion that aligns seamlessly with vocal tracks. This feature enables singers to produce engaging performances, maintaining artistic integrity without the risk of motion degradation over time.

Podcast & Long Interviews

In the realm of podcasts and extended interviews, LongCat Video Avatar supports hours-long speaking videos while ensuring that appearance, gestures, and visual clarity remain consistent. This capability makes it ideal for content creators looking to engage audiences over longer formats.

Sales & Corporate Presentations

LongCat Video Avatar empowers businesses by producing professional AI presenters that can manage silent moments naturally. This eliminates awkward pauses or robotic stillness, making presentations more engaging and interactive, which is crucial in corporate environments.

Frequently Asked Questions

What makes LongCat Video Avatar different from other avatar models?

LongCat Video Avatar stands out due to its advanced architecture that emphasizes long-duration video generation, ensuring high-quality output and natural human dynamics that other models often struggle to deliver.

Can LongCat Video Avatar support multiple characters in a single video?

Yes, LongCat Video Avatar natively supports multi-character interactions, allowing for complex conversations and scenarios with individual identity preservation, all while maintaining visual coherence.

What is Cross-Chunk Latent Stitching?

Cross-Chunk Latent Stitching is a proprietary technology that prevents pixel degradation and visual noise during long video sequences, ensuring that the quality remains seamless and high across extensive content.

Is LongCat Video Avatar suitable for real-world production pipelines?

Absolutely. LongCat Video Avatar is designed with efficient high-resolution inference capabilities, making it practical for deployment in real-world production environments, allowing for rapid iteration and scalability.

Top Alternatives to LongCat Video Avatar

Gemini Omni

Gemini Omni crushes the clunky tool-switching grind by merging multimodal prompting, chat editing, and visual remix into one seamless creation flow.

CoffeeTrans

CoffeeTrans beats generic tools by delivering Netflix-level subtitles and multi-language translations from any audio or video file in one click.

Outfit Anyone

Outfit Anyone is an AI-powered virtual try-on tool that lets you see any outfit on any body, delivering ultra-realistic results in seconds.

VisoMaster

VisoMaster crushes DeepFaceLab with real-time AI face swapping for videos and photos, delivering pro results instantly.

Happy Horse AI

Transform text and images into stunning cinematic videos with synchronized audio using Happy Horse AI's advanced video generation technology.

MuseSpark AI

MuseSpark AI is a battle-tested all-in-one design toolkit that transforms any spark of inspiration into stunning designs.

VO4 AI

VO4 AI crushes complex editors to turn text or images into viral 6-second videos that dominate social feeds.

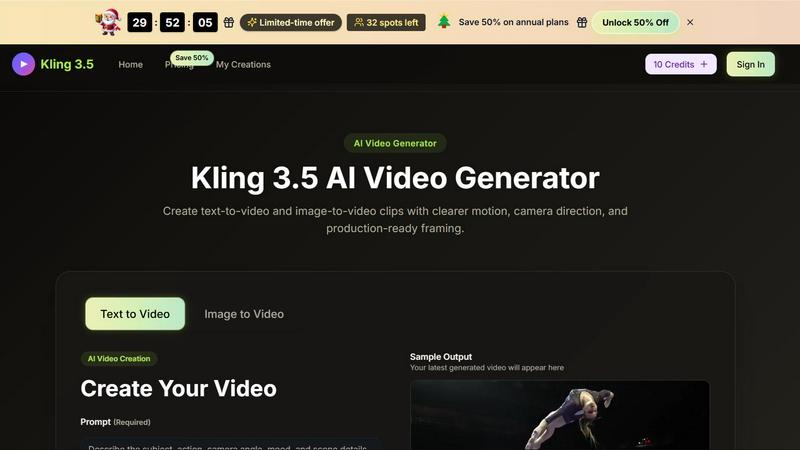

Kling 3.5

Kling 3.5 transforms your text and images into stunning videos with precise motion and professional framing, perfect for any project.